
LXC (LinuX Containers) Introduction

1. Overview

1.1 What is LXC?

LXC (LinuX Containers) is an operating system level virtualization method for running multiple isolated Linux systems (containers) on a single control host.

The Linux kernel provides cgroups (control groups) for limiting and isolating resources (CPU, memory, block I/O, network, etc.) without starting any virtual machines. The kernel also provides namespaces, which isolate an application's view of the operating environment, including process trees, network, user IDs and mounted file systems.

In other words: LXC is “chroot” on steroids.

A “chroot” jail provides the ability to run programs in an isolated root directory environment. However, process and network environments are not isolated from the host.

LXC takes the isolation approach a big step further. LXC offers application environments completely isolated from the host and from each other.

1.2 LXC Applications

The LXC virtualization technique has a wide range of applications:

- Consolidating several Linux machines on one hardware server
- Running services in an isolated environment: webserver, database server, etc.
- Virtual Private Servers
- Application sandboxes
- Application appliances (e.g. Docker)
- Developing/testing software in an isolated environment
- Running a different release of a Linux distribution
- Providing a different Linux distribution on the same host

1.3 LXC Limitations

LXC containers use (by nature) the Linux kernel of the host. This limits the application environments to those that are compatible with the host's Linux kernel version:

- The Linux distribution release in the LXC container may differ from the host, but must be able to run under the host's Linux kernel version. This might be accomplished, for example, by installing a recent (backported) kernel on the host.

- You cannot run a non-Linux OS in an LXC container. Use a VMM (virtual machine monitor) instead: Xen, VirtualBox, KVM, etc.

1.4 LXC Performance

Since LXC simply runs processes in another namespace and doesn't rely on a virtual machine, LXC containers run virtually without overhead. The overall performance of the computer is determined by the total load the running processes impose on the system. Using “cgroups” (Control Groups), resource limits (RAM, CPU, etc.) can be set on containers.

In contrast to VMM systems, LXC doesn't waste memory on VM (virtual machine) memory reservation. The memory footprint of an LXC container is determined solely by the total memory footprint of the processes running in the container. So even on hardware with a limited amount of RAM, multiple LXC containers can be operated simultaneously.

1.5 LXC Security

From a security standpoint, providing unprivileged (non-Root) user accounts on LXC containers doesn't differ from providing unprivileged accounts on the host system. Allowing Root access to privileged (running as Root) LXC containers is not safe, however: under certain conditions the host system might be compromised.

Starting from Linux kernel 3.14 and LXC 1.0.5 it is possible to configure and operate unprivileged LXC containers. This is accomplished by mapping LXC container “UID=0” (Root) to a non-zero (unprivileged) UID on the host.
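The UID mapping can be illustrated with a minimal sketch. Python is used here only for illustration and is not part of LXC. The sketch assumes the mapping is described the way the kernel describes user-namespace ID maps: as ranges consisting of the first ID inside the container, the first ID on the host, and the number of IDs in the range. The names `IdRange`, `host_id` and `EXAMPLE_MAP`, and the base value 100000, are hypothetical and chosen for the example.

```python
from typing import NamedTuple, Optional, Sequence


class IdRange(NamedTuple):
    """One mapping range: first ID in the container, first ID on the host, count."""
    inside_start: int
    outside_start: int
    length: int


def host_id(container_id: int, id_map: Sequence[IdRange]) -> Optional[int]:
    """Translate a container UID/GID to the host ID, or None if it is unmapped."""
    for r in id_map:
        offset = container_id - r.inside_start
        if 0 <= offset < r.length:
            return r.outside_start + offset
    return None


# Hypothetical map: container IDs 0..65535 correspond to host IDs 100000..165535.
EXAMPLE_MAP = [IdRange(0, 100000, 65536)]

print(host_id(0, EXAMPLE_MAP))      # container Root -> 100000
print(host_id(1000, EXAMPLE_MAP))   # ordinary container user -> 101000
print(host_id(70000, EXAMPLE_MAP))  # outside the mapped range -> None
```

With such a map, a process running as Root inside the container only holds the rights of host UID 100000, which is an ordinary unprivileged ID on the host.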

2. LXC Networking scenarios

An important step in implementing LXC is choosing the appropriate network scenario for the application. This section discusses the most popular networking scenarios.

2.1 Network type "none" (no network configuration)

LXC offers several network types, amongst these a “none” type. When using the “none” network type the LXC container simply shares all the network resources with the host. This network setup type is well suited for simple tests or to run a (graphical) application in a sandbox.

- Note that since UDP/TCP ports are shared with the host the allocation of listening ports in the LXC container may conflict with those allocations in the host and vice versa.
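The port conflict described in this note can be reproduced with a small sketch. It does not use LXC at all: it simply binds two TCP sockets to the same port inside a single network namespace, which is the situation a “none” type container and its host are in. The function name `port_conflict_demo` is hypothetical, and the loopback address is chosen only to keep the example self-contained.

```python
import errno
import socket


def port_conflict_demo():
    """Bind two TCP sockets to the same port in one network namespace.

    Returns the errno of the second bind attempt, or None if it succeeded.
    """
    first = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    second = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    try:
        first.bind(("127.0.0.1", 0))   # port 0: let the kernel pick a free port
        first.listen(1)
        port = first.getsockname()[1]
        try:
            second.bind(("127.0.0.1", port))  # same address and port again
        except OSError as exc:
            return exc.errno
        return None
    finally:
        first.close()
        second.close()


result = port_conflict_demo()
# Print the symbolic error name if the second bind failed.
print(errno.errorcode.get(result, result))
```

On a typical Linux system the second bind is expected to fail with "address already in use", regardless of whether the two processes involved run on the host or in a container that shares the host's network.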

For most applications the “none” network type is inappropriate, since the LXC container has unlimited access to the network resources of the host. There is nothing to stop the container from compromising the host's live network configuration.

- Don't confuse the “none” setup type with “empty”.

The “empty” setup configures the loopback interface only. The container won't have any network access to/from the outside world.

- More info about LXC network types:

$ man lxc.container.conf

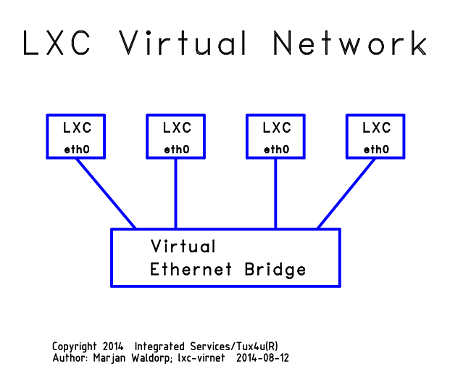

2.2 LXC Virtual Ethernet Network

For most applications the LXC network setup comprises the creation of a virtual Ethernet network centered around a virtual Ethernet bridging device. Each LXC container is linked to this Ethernet bridging device via a virtual Ethernet device pair, of which one half is located inside the container and the other half outside the container. The outside halves of these virtual Ethernet device pairs are visible in the host's network configuration (ifconfig).

There are two ways to connect the LXC virtual network to the outside world:

- Bridging

- (NAT) Routing

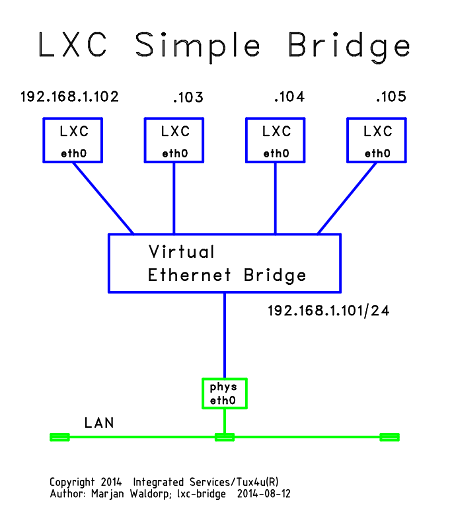

2.3 LXC Simple Bridge

In the “Bridging” scenario the Virtual Ethernet Bridge is directly connected to the physical networking interface of the host. Since the physical networking interface has to pass all Ethernet frames to the corresponding virtual LXC Ethernet interfaces, it has to operate in “promiscuous mode”. The host's IP-address is configured on the virtual Ethernet Bridge.

2.4 LXC NAT Routing

In the “NAT Routing” scenario the Virtual Ethernet Bridge operates its own (private) IP network. All traffic to/from the outside world is routed by the Linux kernel. Since in most cases we don't want to deal with an extra IP network, Linux “iptables” NAT (network address translation) rules hide the LXC virtual network from the outside world.
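The effect of such NAT rules on outgoing traffic can be modelled with a toy sketch. This is not how iptables is configured or implemented; it only shows the address rewriting idea under stated assumptions. The network `10.0.3.0/24`, the host address `192.0.2.10` and the function name `masquerade` are hypothetical values chosen for illustration.

```python
import ipaddress

# Hypothetical addresses, chosen only for illustration.
LXC_NET = ipaddress.ip_network("10.0.3.0/24")   # private network on the virtual bridge
HOST_ADDR = ipaddress.ip_address("192.0.2.10")  # host address on the outside network


def masquerade(src, dst):
    """Return the (source, destination) pair as seen after the host's NAT step.

    Packets that leave the private LXC network get the host's address as
    their source; all other packets pass unchanged.
    """
    src_ip = ipaddress.ip_address(src)
    dst_ip = ipaddress.ip_address(dst)
    if src_ip in LXC_NET and dst_ip not in LXC_NET:
        return str(HOST_ADDR), str(dst_ip)
    return str(src_ip), str(dst_ip)


# Container talking to the outside world: the source is rewritten.
print(masquerade("10.0.3.15", "198.51.100.7"))  # ('192.0.2.10', '198.51.100.7')
# Container talking to another container: nothing changes.
print(masquerade("10.0.3.15", "10.0.3.16"))     # ('10.0.3.15', '10.0.3.16')
```

From the outside, all container traffic appears to originate from the host itself, which is why the containers are hidden in this scenario.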

2.5 Comparison of LXC "Simple Bridging" vs "NAT Routing"

| Issue | LXC Simple Bridging | LXC NAT Routing | Comment |
|---|---|---|---|
| LXC Containers visible from outside | Yes | No (hidden) | 1 |
| LXC Container IP-address | Outside IP-address | Inside IP-address | 2 |
| Outside access to LXC services | Direct | Via DNAT rules | |
| Services operate on real IP-addresses | Yes | No | 3 |
| Wireless LAN support | No | Yes | 4 |
| Firewall support (Shorewall) | Complex | Host central | 5 |

Comments:

1. Bridging: Containers show up as separate hosts.
2. Bridging: Each Container needs an outside IP-address!
3. NAT Routing: Service processes may need extra configuration to make them aware of the outside world they are serving (e.g. sendmail).
4. Most wireless network interfaces won't operate in promiscuous mode.
5. Bridging: Firewalling is complex. Choose from:
   - iptables firewalling in each Container and the host
   - Host MAC layer firewalling

3. More information

| Link | Description |
|---|---|
| https://wiki.debian.org/LXC | Debian Wiki: LXC |
| https://www.stgraber.org/2013/12/20/lxc-1-0-blog-post-series/ | Stephane Graber's blog post series on LXC 1.0 |
| https://wiki.deimos.fr/LXC_:_Install_and_configure_the_Linux_Containers | Deimosfr: LXC: install and configure Containers |
| http://www.vislab.uq.edu.au/howto/lxc/lxcnetwork.html | UQVislab LXC networking |
| https://wiki.archlinux.org/index.php/Linux_Containers#Virtual_Network_Types | Archlinux Wiki: LXC: Virtual Network Types |


Copyright © 2014 Integrated Services; Tux4u.nl

Author: Marjan Waldorp; lxc/lxc-introduction 2014-08-13